RSA statistics showed young and new male drivers had an accident rate 5 to 10 times higher than that of the next highest group. For my Interactive Media master’s thesis I wanted to find ways to mitigate this worrying trend by examining the issue from the learner driver’s perspective. The project examined the real-time visual, audio and tactile responses of novice drivers, providing insights into their attention patterns and overall situational awareness. I received a distinction for the project for its innovation and execution.

For the final project of my MSc degree at the University of Limerick, we could propose a project to design a solution for any market we wished.

A close friend of mine was taking driving lessons at the time and had a lot of opinions to share on road safety and the learning process. This inspired me to take a closer look at the system through the lens of product development.

As this was a master’s project, I was working under strict limits on deadlines, budget and ethics.

I want to gauge a learner driver’s ability, then test a way to improve the result, which will easily transfer to a real-world environment.

I also need to accomplish this without using an actual car, due to (sensible) university ethics guidelines.

By constructing a practical driving simulator as accurately as possible, I can safely test inexperienced drivers on their abilities.

My research found that Observation and Safety Checks are among the most common points of failure in driving exams. These would therefore be the most valuable aspects to address.

If I can measure a learner’s observation skills objectively, I can think of a way to offset that mental load, the same way a GPS offloads the need to think about navigation.

Based on my three hypotheses, I built a basic driver simulation rig. My core concept was to use an eye-tracking camera to measure mirror responsiveness, introduce separate small sensory reminders (touch, sight and sound), and test if they improved mirror response rates.
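To make the idea of "mirror responsiveness" concrete, here is a minimal Python sketch of how it could be measured from eye-tracking output. It is not the code from the project. The region labels, the `GazeSample` structure and the 8-second `max_gap` threshold are all assumptions chosen for illustration.

```python
from dataclasses import dataclass

# Hypothetical region labels; the labels used by the original rig are not documented here.
MIRROR_REGIONS = {"rear_mirror", "left_mirror", "right_mirror"}


@dataclass
class GazeSample:
    t: float      # seconds since the start of the drive
    region: str   # where the eye tracker reports the driver is looking


def mirror_glance_times(samples):
    """Return the start time of each separate glance at any mirror."""
    glances = []
    in_mirror = False
    for s in samples:
        looking = s.region in MIRROR_REGIONS
        if looking and not in_mirror:
            glances.append(s.t)  # a new glance begins on this sample
        in_mirror = looking
    return glances


def overdue_intervals(samples, max_gap=8.0):
    """Return (start, end) spans with no new mirror glance for more than max_gap seconds.

    max_gap is an assumed threshold, not a figure from the study.
    """
    if not samples:
        return []
    checkpoints = [samples[0].t] + mirror_glance_times(samples) + [samples[-1].t]
    return [(a, b) for a, b in zip(checkpoints, checkpoints[1:]) if b - a > max_gap]
```

Counting the overdue spans with and without a sensory reminder active would give one simple way to compare response rates between test runs.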

Some basic programming allowed communication between XML, Python and the Arduino’s C++.
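As a rough illustration of that kind of glue code, the sketch below reads a reminder setting from XML in Python and turns it into a single byte that an Arduino sketch could act on. The XML element, its attributes and the one-byte commands are all hypothetical; the project's actual formats are not described here. Sending the byte over a serial connection is left out.

```python
import xml.etree.ElementTree as ET

# Hypothetical one-byte commands that the Arduino code would switch on.
COMMANDS = {"touch": b"T", "sight": b"L", "sound": b"S"}


def parse_reminder(xml_text):
    """Read a hypothetical <reminder type="..." after="..."/> element.

    Returns the reminder type and the delay in seconds before it should fire.
    """
    node = ET.fromstring(xml_text)
    return node.get("type"), float(node.get("after"))


def command_for(xml_text, seconds_since_mirror_check):
    """Return the byte to send to the Arduino, or None if no reminder is due yet."""
    kind, threshold = parse_reminder(xml_text)
    if seconds_since_mirror_check < threshold:
        return None
    return COMMANDS[kind]
```

For example, `command_for('<reminder type="touch" after="8"/>', 10)` would select the touch reminder, because more than 8 seconds have passed since the last mirror check.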

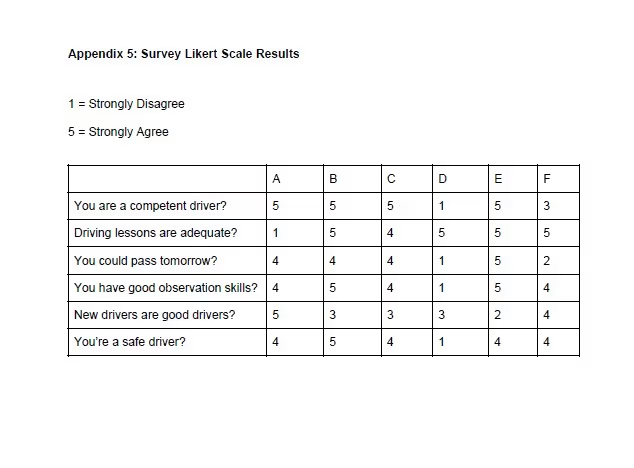

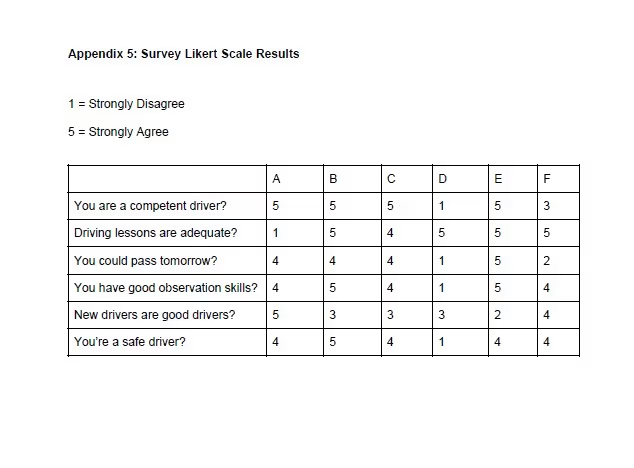

By analysing eye movement, observational data, and pre- and post-activity surveys, I aimed to identify common deficiencies and strengths in learner drivers’ observation, ultimately contributing to the development of more effective driver education programs.

The overall consensus on the device was that the different forms of sensory feedback used across the tests were, paradoxically, both too intrusive (“It’s really distracting”) and, once the brain started to filter out the extra signals, completely ignorable (“I didn’t even notice. Were there supposed to be any vibrations?”).

The simulation worked as well as I had intended, but without proper in-car testing it would always feel more like a game with no consequences.

Overall, testing my third hypothesis (notifying drivers of their observations improves responsiveness) only increased users’ anxiety. Users also felt the form factor of the devices was unappealing and bulky, and that it intruded on their idea of the car as a “safe space” where they were in control.

If I'd developed a phone app instead, or a system that returned the results once a drive had finished, I could have encouraged a more active effort on the part of the user and gathered more reliable real-world data.

I would have loved to take this approach for a second round of development, had I more time to pursue it.